Lessons Learned Part 11: Storage

This article is part 11 of a series of articles exploring the Lessons Learned Life Cycle.

Knowledge is the small part of ignorance that we arrange and classify. – Ambrose Bierce

If you’ve been following this series on Lessons Learned (start here at the beginning if you haven’t), then you’ve learned how to identify, capture, validate, and determine the scope of your lessons. But now you need to store them somewhere, and this is where many lessons learned systems falter. It’s why this is Part 11 of a series rather than Part 2.

Many people think that to have a lessons learned database we just need to (1) write up some lessons, (2) store them somewhere, and (3) tell others “be sure to check the lessons learned database.” Well, it’s true that this will be a lessons learned database, but not necessarily an effective one.

To have an effective system, you need to make it as easy as possible for others to find the lessons most relevant and applicable to the work they are doing at the time. This means that the lesson as written needs not only good content but good metadata (data about data). And the content itself can be structured and broken down into fields that make it easier to identify and group similar lessons.

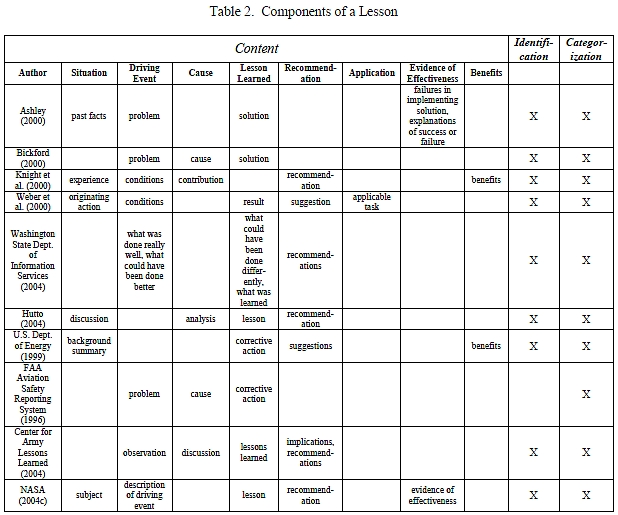

In the table below, I looked at ten different examples of lessons learned systems in order to see if there were common patterns across them (references are at the bottom of this post). Some of these were research papers where academic authors studied these systems, while others were write-ups by lessons learned database administrators describing their own systems. It’s important to note that none of the cases studied has all of the components listed. Most likely your own database will not have all of them either, but you should at least think about them when designing your system in order to make sure it is as robust as possible.

Looking across the top, you will see that the table is broken into three large categories. Content is everything central to the lesson itself, in other words the body of the lesson. Identification and Categorization are the metadata. All instances I studied (with one exception) had these two types of metadata, but I didn’t break them out into more detail because they tend to be highly customized to each particular application. Identification is the information required to separate one lesson from another, and usually consists of a lesson identification number, author, approval, submission or creation date, etc. Categorization includes the taxonomic structure, keywords, tags, and labels that enhance the user’s ability to find that lesson.
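To make the two kinds of metadata concrete, here is a minimal sketch in Python. It is only an illustration, not a description of any of the systems studied: the class names, the field names, the `find_by_keywords` helper, and the sample records are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Identification:
    """Information that separates one lesson from another (hypothetical fields)."""
    lesson_id: str
    author: str
    created: date
    approved_by: Optional[str] = None  # stays None until someone approves it


@dataclass
class Categorization:
    """Information that helps a user find the lesson (hypothetical fields)."""
    taxonomy_path: list = field(default_factory=list)  # broadest category first
    keywords: set = field(default_factory=set)


@dataclass
class LessonMetadata:
    identification: Identification
    categorization: Categorization


def find_by_keywords(lessons, wanted):
    """Return the lessons whose keywords include every keyword in `wanted`."""
    wanted = {w.lower() for w in wanted}
    return [
        m for m in lessons
        if wanted <= {k.lower() for k in m.categorization.keywords}
    ]


# Made-up sample records, loosely based on the two examples in this article.
icing = LessonMetadata(
    Identification("LL-0001", "Example Author A", date(2020, 1, 15)),
    Categorization(["Aviation", "Take-off"], {"icing", "winter"}),
)
whiteboard = LessonMetadata(
    Identification("LL-0002", "Example Author B", date(2020, 2, 3)),
    Categorization(["Education", "Lecturing"], {"whiteboard", "slides"}),
)

matches = find_by_keywords([icing, whiteboard], ["Icing"])
print([m.identification.lesson_id for m in matches])
```

The point of the split is that identification answers "which lesson is this?" while categorization answers "how would someone looking for it describe it?" A real system would likely use a controlled vocabulary rather than free-form keywords.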

The components of the Content section could be considered as fields in a database record. As I mentioned above, not every system has every content element. Most elements are relatively self-explanatory, so instead of listing definitions for each field I’ll provide a couple of examples that would illustrate a lesson with all components, one negative and one positive. The reason I call attention to positive vs. negative lessons is that you will notice that some organizations view the driving event that created the lesson negatively (“problem”), while others see it in more neutral terms (“condition,” “observation,” “description,” etc.). It’s typically a good idea to stick with neutral terms in order to capture positive lessons as well as negative ones. Unless it is well understood by everyone involved that the system is intended to capture only problems and their solutions, lessons learned system designers may find themselves unintentionally limiting their pool of potential lessons if they use negative terminology.

Example 1 (negative)

An airplane takes off during a snowstorm and crashes because there was ice on the wings.

- Situation: Pilot preparing for and executing a take-off

- Driving Event: Snow and sub-freezing temperatures

- Cause: Ice on the wings

- Lesson Learned: Ice on the wings can affect the lift and stability of an airplane during take-off

- Recommendation: Check the wings for ice and remove any prior to take-off

- Application: Any time an airplane is preparing to take off in wet, sub-freezing conditions

- Evidence of Effectiveness: Airplanes where ice has been removed from the wings have fewer take-off problems than airplanes in the same situation where ice has not been removed

- Benefits: Reduction in loss of life and property

Example 2 (positive)

You are a geometry teacher, teaching your class using PowerPoint slides. One day your laptop crashes so you have to use the whiteboard, but you discover to your surprise that the students actually learn better because you are building your diagrams and graphs a piece at a time and because the material stays visible longer, enabling better note taking.

- Situation: Teaching a math course

- Driving Event: Using the whiteboard instead of PowerPoint for the lecture

- Cause: Laptop crash

- Lesson Learned: Teaching by writing on the board can be more effective than by using slides

- Recommendation: Use the whiteboard when lecturing instead of PowerPoint

- Application: Any time when teaching a class that involves the understanding of complex diagrams, charts, or graphs

- Evidence of Effectiveness: Students do better on tests and homework when using this method

- Benefits: Improved understanding of the concepts of the course
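The eight components used in both examples map naturally onto a record with one field per component. The following is a minimal sketch in Python, assuming plain text fields; the `LessonContent` class and the `render` helper are hypothetical names chosen for illustration, and the field is called `driving_event` rather than `problem` so that positive lessons fit as well.

```python
from dataclasses import dataclass, fields


@dataclass
class LessonContent:
    """Body of a lesson, one attribute per content component."""
    situation: str
    driving_event: str  # neutral term, so positive lessons fit too
    cause: str
    lesson_learned: str
    recommendation: str
    application: str
    evidence_of_effectiveness: str
    benefits: str


# Example 1 from the article, stored as a record.
icing = LessonContent(
    situation="Pilot preparing for and executing a take-off",
    driving_event="Snow and sub-freezing temperatures",
    cause="Ice on the wings",
    lesson_learned="Ice on the wings can affect the lift and stability "
                   "of an airplane during take-off",
    recommendation="Check the wings for ice and remove any prior to take-off",
    application="Any time an airplane is preparing to take off in wet, "
                "sub-freezing conditions",
    evidence_of_effectiveness="Airplanes where ice has been removed from the "
                              "wings have fewer take-off problems",
    benefits="Reduction in loss of life and property",
)


def render(lesson):
    """Format a lesson as a bulleted list, one line per component."""
    lines = []
    for f in fields(lesson):
        label = f.name.replace("_", " ").capitalize()
        lines.append(f"- {label}: {getattr(lesson, f.name)}")
    return "\n".join(lines)


print(render(icing))
```

Because every lesson shares the same field names, lessons can be compared, grouped, and searched field by field instead of as free text.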

In addition to the positive/negative characterization of lessons, I want to call attention to a few other aspects that jumped out when I put the table together. First is that Evidence of Effectiveness is an important consideration, yet it is not included in most of the systems studied. A problem might have been found, a solution implemented, and a recommendation made, all without establishing a causal link between the solution and the outcome. When a solution is tried and the problem then goes away, a recommendation made without evidence of effectiveness may rest on pure coincidence. This can lead to a corporate mythology in which people are expected to take actions that have no bearing on the problem and may in fact cause others.

It was also interesting that only two authors specifically mention capturing benefits, and only one of those (Weber) includes what she calls an “applicable task.” It may be that for the others the benefits are obvious and encompassed by the solution, so a separate field may be redundant. And having an Application field seems like a reasonable idea as a way of pointing the user to areas where the lesson might be best applied, but this could also be done in alternate ways such as through extensive categorization.

Among the lessons learned systems listed, the U.S. Federal Aviation Administration (FAA)’s Aviation Safety Reporting System (ASRS) is a special case, but one worth talking about. Almost all lessons learned databases contain information about those who submitted the lessons. This is important because it enables users to contact them to gain more information and better understanding about their experience. But the FAA, because it is gathering information on near-miss “incidents” that might be embarrassing, takes the opposite approach and goes out of its way to preserve anonymity in order to increase the likelihood of reporting. It created this atmosphere of trust through three design actions:

- It separated “incidents” from actual accidents. Any accidents, where participants must be identified, are reported to the National Transportation Safety Board. The ASRS is only for incidents where mistakes or issues occurred but no harm or damage was done.

- Although the goal of the ASRS is to capture lessons related to aviation safety, it is managed by NASA for the FAA. NASA compiles the data so that the FAA never sees information that could potentially identify a submitter.

- The FAA has created specific regulations (Section 91.25) that prohibit it from using information found in submissions to penalize the submitter for violations of FAA regulations.

As a result, the FAA gets thousands of submissions every month. This is exactly what’s necessary because the agency is less interested in specific individual lessons than in uncovering and addressing broad patterns of behavior or system design that might pose safety hazards.

It is important to note that identification, categorization, problem, solution, recommendation, and evidence of effectiveness can all be seen not just as fields in a repository but as process steps. A typical organization probably will not obtain all of the information at once, but may want to share part of it before the entire collection process is complete. For example, in the case of a lesson that might avert a catastrophe or save lives, it may be critical to share information about the problems that can arise from the situation even before a solution is found.

In addition, the problem, solution, and recommendation could conceivably come from different people. Important problems might be assigned to be worked until solutions are found. Therefore a robust lessons learned system should include not just a database or repository but a workflow capability that will allow incomplete lessons to be identified, and pieces of the lesson to be posted over time as they are learned. Workflow is also essential in managing the validation process, where approvals may often be required.
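One way to picture such a workflow is a lesson record whose pieces start out empty and are filled in over time, possibly by different people. The sketch below is a minimal illustration in Python under that assumption; the `Lesson` class, the `Status` values, the `post` method, and the sample contributors are all hypothetical and not taken from any of the systems studied.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Status(Enum):
    INCOMPLETE = "incomplete"
    AWAITING_APPROVAL = "awaiting approval"
    APPROVED = "approved"


# Pieces of a lesson that may arrive at different times.
STAGED_FIELDS = ("problem", "solution", "recommendation",
                 "evidence_of_effectiveness")


@dataclass
class Lesson:
    lesson_id: str
    problem: Optional[str] = None
    solution: Optional[str] = None
    recommendation: Optional[str] = None
    evidence_of_effectiveness: Optional[str] = None
    approved: bool = False
    contributors: dict = field(default_factory=dict)  # field name -> person

    def post(self, field_name, text, contributor):
        """Record one piece of the lesson and who supplied it."""
        if field_name not in STAGED_FIELDS:
            raise ValueError(f"unknown field: {field_name}")
        setattr(self, field_name, text)
        self.contributors[field_name] = contributor

    def missing(self):
        """List the pieces that have not been posted yet."""
        return [n for n in STAGED_FIELDS if getattr(self, n) is None]

    def status(self):
        if self.missing():
            return Status.INCOMPLETE
        return Status.APPROVED if self.approved else Status.AWAITING_APPROVAL


# The problem is shared right away; the rest follows as it is learned.
lesson = Lesson("LL-0003")
lesson.post("problem", "Ice on the wings during take-off", "line pilot")
print(lesson.status().value, lesson.missing())

lesson.post("solution", "De-ice the wings before departure", "ground crew")
lesson.post("recommendation", "Check wings for ice prior to take-off",
            "safety office")
lesson.post("evidence_of_effectiveness", "Fewer take-off problems observed",
            "safety office")
print(lesson.status().value)
```

An incomplete lesson is still visible and searchable in this design, which matters when the problem alone is worth sharing. Approval is kept as a separate step so that validation can happen after all the pieces are in.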

So now that you have your lessons stored, how do you let people know about them? In the next article (part 12), we’ll discuss dissemination.


Article source: Lessons Learned Part 11: Storage.

Header image source: Wikimedia Commons, CC BY 2.0.

References and further reading:

- Kevin Ashley, “Applying Textual Case-Based Reasoning and Information Extraction in Lessons Learned Systems”

- John Bickford, “Sharing Lessons Learned in the Department of Energy”

- Center for Army Lessons Learned, “Lessons Learned Submission Form”

- Rod Hutto, “Program Benchmark – WSRC”

- Chris Knight and David Aha, “A Common Knowledge Framework and Lessons Learned Module”

- FAA Aviation Safety Reporting System (ASRS), “Incident Report Form”

- U.S. Department of Energy: “DOE Standard: The DOE Corporate Lessons Learned Program”

- Washington State Department of Information Services, “Review Lessons Learned”

- Rosina Weber et al: “Categorizing Intelligent Lessons Learned Systems”

One of the problems with getting “proof of effectiveness” is that the organisational cost of doing that step is often greater than the total cost of all the actions to mitigate and correct the issue.

And it only becomes an issue in most organisations if the problem re-occurs.

I often get asked about recording repeat lessons.

My answer is YES.

Right, there are always trade-offs in capturing evidence of effectiveness, just as there are trade-offs even in deciding what is worth capturing as a lesson in the first place. I would say that generally speaking, the larger the consequences, the more important evidence of effectiveness is. That’s why you see it listed in NASA’s process. If knowing whether a lesson is effective is the difference between people living and dying, or keeps billion-dollar spacecraft from crashing into Mars, it is probably worth the effort to capture.