Optimization and Complexity: Goodness of Fit [Systems thinking & modelling series]

This is part 60 of a series of articles featuring the book Beyond Connecting the Dots, Modeling for Meaningful Results.

The first step in using historical data to calibrate the model parameters is to understand what is meant by “the best fit” between historical and simulated data. Conceptually, the idea of a “good fit” seems obvious. A good fit is one where the historical and simulated results are very close together (a perfect fit is when they are the same, but that is generally more than we can hope for). However, putting a precise mathematical definition on the concept is not trivial.

Many commonly used ‘goodness of fit’ measures exist; some key measures are listed below.

Squared Error

Squared error is probably the most widely used measure [1]. To calculate it, we determine, for each time period, the difference between the historical data value and the simulated value, and square that difference. We then sum these squared differences to obtain the total error for the fit. Higher totals indicate worse fits, and lower totals indicate better fits.

The following equation could be placed in a variable to calculate the squared error between a primitive named Simulated and one named Historical:

([Simulated]-[Historical])^2
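Outside of the modeling tool, the same procedure can be sketched in a few lines of Python. This is a minimal illustration, not the book's implementation: the function name and the data values are hypothetical, and the two series are assumed to hold one value per time period.

```python
def total_squared_error(simulated, historical):
    """Sum the squared differences between two equal-length series."""
    if len(simulated) != len(historical):
        raise ValueError("series must cover the same time periods")
    return sum((s - h) ** 2 for s, h in zip(simulated, historical))


# Hypothetical values, one per time period.
historical = [10.0, 12.0, 15.0, 19.0]
close_fit = [10.5, 11.5, 15.5, 18.5]   # misses by 0.5 every period
poor_fit = [8.0, 14.0, 12.0, 23.0]     # misses by 2, 2, 3 and 4

close_total = total_squared_error(close_fit, historical)
poor_total = total_squared_error(poor_fit, historical)

# The lower total identifies the better fit.
print(close_total, poor_total)
```

With these made-up numbers the close fit scores far lower than the poor fit, which matches the rule that lower totals indicate better fits.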

Note that maximizing the R² measure described earlier is equivalent to minimizing the squared error.
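One way to see this equivalence is to write R² out, assuming it is the usual coefficient of determination. The symbols are introduced here for illustration only: S_t is the simulated value at time t, H_t is the historical value, and H̄ is the mean of the historical values.

$$
R^2 = 1 - \frac{\sum_t \left(S_t - H_t\right)^2}{\sum_t \left(H_t - \bar{H}\right)^2}
$$

The denominator depends only on the historical data, so it stays fixed as the model parameters change. The numerator is the total squared error, so R² rises exactly when the squared error falls.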

Absolute Value Error

A characteristic of squared error is that outliers are penalized heavily compared to other data points. Outliers are points in time where the fit is unusually bad. Because the squared error metric squares the differences between simulated and historical data, a difference that is twice as large contributes four times as much error. This can be a drawback of squared error if you do not want the outliers to have special prominence and weight in the analysis.

An alternative to squared error that weights each difference in proportion to its size is the absolute value error. Here, the absolute value of the difference between the simulated and historical data series is taken. The following equation could be placed in a variable to calculate the absolute value error between a primitive named Simulated and one named Historical:

Abs([Simulated]-[Historical])
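The different treatment of outliers can be seen in a small Python comparison. This is a sketch with hypothetical function names and made-up data: one simulated series misses by a small amount in every period, the other is perfect except for a single large miss.

```python
def total_squared_error(simulated, historical):
    """Sum of squared differences across all time periods."""
    return sum((s - h) ** 2 for s, h in zip(simulated, historical))


def total_absolute_error(simulated, historical):
    """Sum of absolute differences across all time periods."""
    return sum(abs(s - h) for s, h in zip(simulated, historical))


# Hypothetical values, one per time period.
historical = [10.0, 10.0, 10.0, 10.0]
steady_miss = [12.0, 12.0, 12.0, 12.0]   # off by 2 in every period
one_outlier = [10.0, 10.0, 10.0, 18.0]   # exact except for one miss of 8

abs_steady = total_absolute_error(steady_miss, historical)
abs_outlier = total_absolute_error(one_outlier, historical)
sq_steady = total_squared_error(steady_miss, historical)
sq_outlier = total_squared_error(one_outlier, historical)

print(abs_steady, abs_outlier)  # absolute error rates both fits the same
print(sq_steady, sq_outlier)    # squared error rates the outlier fit as worse
```

Under absolute value error the two fits are equally good, since both are off by a total of 8. Under squared error the single outlier makes the second fit four times worse than the first.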

Other Approaches

Many other techniques are available for measuring error or assessing goodness of fit. Most statistical approaches specify a full probability model for the data and then take the goodness of fit not as a measure of error, but as the likelihood of observing the results we saw given the parameter values [2]. To be clear, optimizing parameter values for models is a more complex issue than what we have presented here. Many sources of error exist in time series, and analyzing them is a complex statistical challenge. The basic techniques we have presented are, however, useful tools that serve as gateways toward further analytical work.
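To make the likelihood idea mentioned above a little more concrete, here is a minimal Python sketch. The text does not commit to any particular probability model, so the one used here is an assumption made purely for illustration: each historical value is taken to equal the simulated value plus independent normally distributed noise with a known standard deviation `sigma`. The function name and data are hypothetical.

```python
import math


def gaussian_log_likelihood(simulated, historical, sigma):
    """Log-likelihood of the historical data under an ASSUMED noise model:
    historical = simulated + independent Normal(0, sigma^2) error."""
    n = len(historical)
    sse = sum((s - h) ** 2 for s, h in zip(simulated, historical))
    return -0.5 * n * math.log(2 * math.pi * sigma ** 2) - sse / (2 * sigma ** 2)


# Hypothetical values, one per time period.
historical = [10.0, 12.0, 15.0, 19.0]
close_fit = [10.5, 11.5, 15.5, 18.5]
poor_fit = [8.0, 14.0, 12.0, 23.0]

ll_close = gaussian_log_likelihood(close_fit, historical, sigma=1.0)
ll_poor = gaussian_log_likelihood(poor_fit, historical, sigma=1.0)

# A higher log-likelihood means the data are more plausible under that fit.
print(ll_close > ll_poor)
```

Under this particular assumption, the log-likelihood is a constant minus a multiple of the total squared error, so ranking fits by likelihood gives the same order as ranking them by squared error. Other noise models would lead to other measures.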

| Exercise 9-1 |
|---|
| You have a model simulating the number of widgets produced at a factory. The model contains a stock, Widgets, containing the simulated number of widgets produced. You also have a converter, Historical Production, containing historical data on how many widgets were produced in the past. Write two equations: one to calculate the squared error for the model’s simulation of historical production, and one to calculate the absolute value error of the same. |

| Exercise 9-2 |
|---|
| You like the idea of penalizing outliers in your optimizations. In fact, you like this idea so much that you would like to penalize outliers even more than squared error does. Create an equation to calculate error that penalizes outliers more than squared error. |

| Exercise 9-3 |
|---|
| Describe why this is not a valid equation to calculate error: [Simulated]-[Historical] |

Next edition: Optimization and Complexity: Multi-Objective Optimizations.

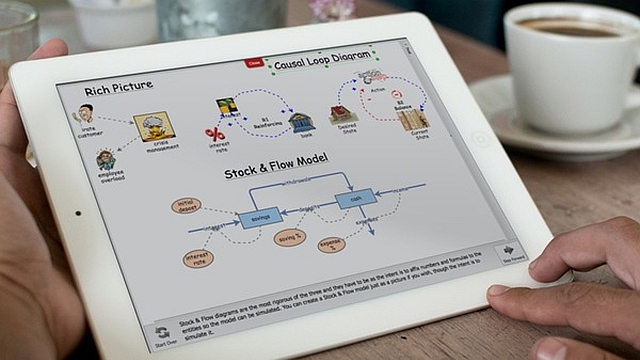

Article sources: Beyond Connecting the Dots, Insight Maker. Reproduced by permission.

Notes:

1. The main reason is that regular linear regression (ordinary least squares, the most widely used modeling tool) uses squared error as its measure of goodness of fit. Doing so simplifies the mathematics of the regression problem greatly in the linear case.

2. Likelihood is a technical statistical term. It can be roughly thought of as equivalent to “probability”, though it is not precisely that.