Building Confidence in Models: Model Implementation [Systems thinking & modelling series]

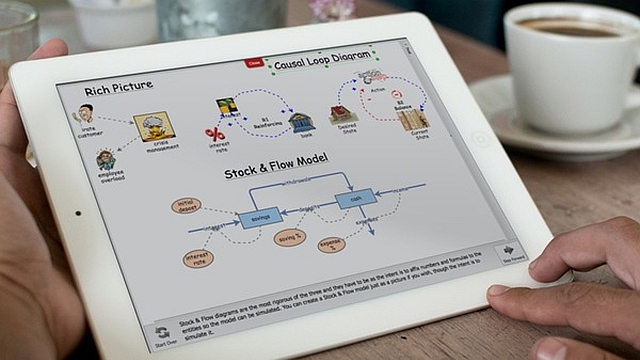

This is part 41 of a series of articles featuring the book Beyond Connecting the Dots, Modeling for Meaningful Results.

Although model implementation is not as much of a lightning rod as model design, significant errors can still occur when a model specification is implemented. Bugs introduced into a model through programming mistakes or mistyped equations can be hard to identify later. This is a particular problem in black-box models, but it is still an important point to consider for all types of models, including those presented here. Fortunately, a number of steps can be taken to ensure the model is implemented correctly.

Primitive Constraints

There will be natural constraints for many of the primitives in the model. For instance, a stock representing the volume of water in a lake can never fall below 0. Similarly, if a variable represents the probability of an event occurring, it must be between 0 and 1.

Often these constraints are left implicit rather than formally specified in the model. A modeler may not think to place a constraint on the water volume, since it obviously can never become negative. However, the existence of these constraints provides an opportunity to implement a level of automatic model checking. By specifying that a primitive can never go above or below a value (using the Max Value and Min Value properties in Insight Maker), you can in effect create a ‘canary in the coal mine’ to warn you if something is wrong in the model. If these constraints are violated, an error message can appear, letting you know that you need to correct some aspect of your model.
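
Insight Maker sets these bounds through the Max Value and Min Value properties rather than through code. To illustrate the same idea outside that tool, here is a minimal Python sketch, assuming a lake stock updated with a simple Euler step. The names `check_bounds`, `simulate_lake` and `ConstraintViolation` are hypothetical and are not part of any Insight Maker API.

```python
class ConstraintViolation(Exception):
    """Raised when a primitive leaves its allowed range (hypothetical helper)."""


def check_bounds(name, value, min_value=None, max_value=None):
    """Return value unchanged, or raise if it falls outside [min_value, max_value].

    A bound of None means that side is unconstrained.
    """
    if min_value is not None and value < min_value:
        raise ConstraintViolation(f"{name} = {value} is below its minimum of {min_value}")
    if max_value is not None and value > max_value:
        raise ConstraintViolation(f"{name} = {value} is above its maximum of {max_value}")
    return value


def simulate_lake(volume, inflow, outflow, steps, dt=1.0):
    """Euler-integrate a lake volume stock, checking its constraint at every step."""
    history = [check_bounds("Lake volume", volume, min_value=0)]
    for _ in range(steps):
        volume += (inflow - outflow) * dt
        # The 'canary': stop the run as soon as the volume goes negative.
        history.append(check_bounds("Lake volume", volume, min_value=0))
    return history
```

With an inflow of 10 and an outflow of 5, `simulate_lake(100, 10, 5, 3)` climbs steadily to 115 and the check stays silent. If a mistyped equation made the outflow too large, as in `simulate_lake(10, 0, 4, 5)`, the volume would dip below zero on the third step and the run would stop with an error instead of silently producing a lake with negative water.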

This concept of constraints in models is similar to the concept of “contracts”, which are supported in some programming languages. These contracts define and constrain the interaction among different parts of the program, causing an error to be generated if the contract is violated. The Eiffel programming language probably has the best support for this approach to development.
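
Python has no built-in contract syntax, but the idea can be approximated. The sketch below is a hypothetical `contract` decorator loosely modeled on Eiffel's `require` (precondition) and `ensure` (postcondition) clauses, applied to the probability example from earlier in this section. It is an illustration only, not a standard library feature.

```python
import functools


class ContractViolation(Exception):
    """Raised when a precondition or postcondition fails (hypothetical helper)."""


def contract(require=None, ensure=None):
    """Wrap a function so its inputs and output are checked on every call.

    require: predicate taking the same arguments as the function.
    ensure:  predicate taking the function's return value.
    """
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            if require is not None and not require(*args, **kwargs):
                raise ContractViolation(f"precondition of {func.__name__} violated")
            result = func(*args, **kwargs)
            if ensure is not None and not ensure(result):
                raise ContractViolation(f"postcondition of {func.__name__} violated")
            return result
        return wrapper
    return decorator


@contract(require=lambda p, n: 0 <= p <= 1 and n >= 0,
          ensure=lambda result: 0 <= result <= 1)
def prob_at_least_one(p, n):
    """Probability that an event with per-trial probability p occurs at least once in n trials."""
    return 1 - (1 - p) ** n
```

Here `prob_at_least_one(0.5, 2)` returns 0.75, while `prob_at_least_one(1.5, 2)` fails its precondition immediately because 1.5 is not a valid probability. Raising an exception rather than using `assert` keeps the checks active even when Python runs with optimizations enabled.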

Unit Specification

When we introduced units in the previous chapter, we showed that they could be a useful tool in constructing models. Units can also be used to ensure that equations are entered correctly. If you fully specify the units in a model, many types of equation errors will result in invalid units, which will create an immediate error. By employing units in your model you can automatically detect an entire class of errors and mistyped equations.
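
As a rough illustration of how unit checking catches a mistyped equation, the following sketch attaches units to numbers in Python. The `Quantity` class is a deliberately tiny, hypothetical stand-in for a real unit system such as Insight Maker's; dedicated libraries (Pint, for example) handle conversions and many more operations.

```python
class UnitError(Exception):
    """Raised when an equation combines incompatible units."""


class Quantity:
    """A number tagged with units, stored as {base unit: exponent}."""

    def __init__(self, value, units):
        self.value = value
        # Drop zero exponents so that m^3/s * s compares equal to m^3.
        self.units = {u: e for u, e in units.items() if e != 0}

    def __add__(self, other):
        # Addition only makes sense for quantities with identical units.
        if self.units != other.units:
            raise UnitError(f"cannot add {self.units} to {other.units}")
        return Quantity(self.value + other.value, self.units)

    def __mul__(self, other):
        # Multiplication adds the exponents of each base unit.
        combined = dict(self.units)
        for unit, exponent in other.units.items():
            combined[unit] = combined.get(unit, 0) + exponent
        return Quantity(self.value * other.value, combined)


volume = Quantity(1000.0, {"m": 3})         # lake volume, m^3
inflow = Quantity(50.0, {"m": 3, "s": -1})  # inflow rate, m^3/s
dt = Quantity(2.0, {"s": 1})                # time step, s

new_volume = volume + inflow * dt  # m^3 + (m^3/s * s): units agree
```

The correct equation, volume plus inflow rate times the time step, yields 1100 m³. Forgetting the `* dt` term, as in `volume + inflow`, tries to add m³ to m³/s and raises `UnitError` at once rather than producing a plausible-looking but wrong number.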

Regression Tests

Tests other than those specified above can be developed. For instance, once the proper behavior of a model is determined, the modeler can create automated tests to periodically confirm the model’s performance. This is a common practice in software engineering that we would like to see more of in model development. Insight Maker itself has a suite of more than 1,000 individual regression tests that automatically test every aspect of its simulation engine.

It is important that regression testing be automated. It is not enough to examine a portion of the model, determine it is currently working correctly, and leave it at that. The problem is that future changes may break the existing functionality (i.e., a “regression”, the introduction of an error or reduced quality compared to an earlier version of the model). Especially for complex models, a change in one part of the model may have an unexpected effect in another part. By implementing a set of automatic checks, you can protect your model against unintended changes and regressions.
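
A minimal sketch of such a test in Python, using the standard `unittest` module. The model (`run_model`, simple exponential growth) and its recorded reference output are hypothetical stand-ins; in practice the reference would be saved from a run of your own model once its behavior has been checked.

```python
import unittest


def run_model(initial, rate, steps):
    """Hypothetical model under test: exponential growth integrated with Euler's method."""
    values = [initial]
    for _ in range(steps):
        values.append(values[-1] + rate * values[-1])
    return values


# Reference output recorded once the model's behavior was judged correct.
REFERENCE = [100.0, 110.0, 121.0, 133.1]


class ModelRegressionTest(unittest.TestCase):
    def test_matches_reference(self):
        result = run_model(100.0, 0.1, 3)
        self.assertEqual(len(result), len(REFERENCE))
        for got, expected in zip(result, REFERENCE):
            # Compare with a tolerance: floating-point results may differ in the last digits.
            self.assertAlmostEqual(got, expected, places=9)

    def test_stock_never_negative(self):
        # A constraint check can double as a regression test.
        self.assertTrue(all(v >= 0 for v in run_model(100.0, -0.5, 20)))
```

Saved in a file named, say, `test_model.py`, the tests can be run with `python -m unittest`, either by hand or by a continuous-integration service on every change. A later edit that alters the trajectory or lets the stock go negative is then flagged right away instead of being discovered months later.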

| Exercise 5-1 |
|---|
| You have a variable representing the total population size of a small city. What constraints might you place on this variable? |

A Second Pair of Eyes

This is not to say that spot checks and point-in-time checks are not worthwhile. It can be very useful to have a second modeler review your models and cross-check the equations. This helps to check for simple mistakes and critique the fundamental structure and choices of the model.

The gold standard in verifying that a model is implemented correctly according to specification is to have a second modeler completely reimplement the model according to that specification. Such reimplementation should ideally occur without access to the original model’s code base to ensure that the second modeler does not simply copy bugs from the original model into the reimplementation. If the results from the two implementations concur, that is strong evidence that the model has been implemented correctly. Although potentially an expensive exercise, it will also most likely identify numerous ambiguities in the specification, which could be valuable in and of itself.
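
The final comparison step can be sketched in Python as follows. The specification ("a stock starts at 100 and loses 10% of its value each time step") and both implementations are hypothetical; in practice each would be a full model built independently by a different modeler, possibly in a different tool.

```python
import math


# Hypothetical specification: a stock starts at 100 and loses 10% of its value each time step.

def implementation_a(steps):
    """First modeler: step-by-step loop."""
    values = [100.0]
    for _ in range(steps):
        values.append(values[-1] - 0.1 * values[-1])
    return values


def implementation_b(steps):
    """Second modeler, working only from the specification: closed-form expression."""
    return [100.0 * 0.9 ** t for t in range(steps + 1)]


def implementations_agree(a, b, rel_tol=1e-9):
    """True if two trajectories have the same length and match point by point."""
    return len(a) == len(b) and all(
        math.isclose(x, y, rel_tol=rel_tol) for x, y in zip(a, b)
    )
```

Here `implementations_agree(implementation_a(50), implementation_b(50))` is `True`. Had the second modeler read "10%" as 10% of the *initial* value, producing `[100.0 - 10.0 * t for t in range(6)]`, the check would fail from the second step onward, exposing exactly the kind of ambiguity in the specification described above.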

Next edition: Building Confidence in Models: Model Results.

Article sources: Beyond Connecting the Dots, Insight Maker. Reproduced by permission.

Header image source: Beyond Connecting the Dots.