Global convergence emerges around five ethical principles for AI, but with divergence on detail

Artificial intelligence (AI) is developing rapidly, and while it is already bringing benefits, warnings about its potential risks and drawbacks have been sounded for some time. Those expressing strong concerns have included high-profile science and technology leaders Stephen Hawking, Elon Musk, and Bill Gates, with Hawking and Musk among the many signatories to the 2015 open letter on Research Priorities for Robust and Beneficial Artificial Intelligence. The letter stated that “Because of the great potential of AI, it is important to research how to reap its benefits while avoiding potential pitfalls.”

With AI expected to play an important role in knowledge management (KM), concerns about the potential negatives of AI have also been raised within the KM community. For example, Sue Feldman discusses a range of ethical issues relating to AI in an article in the current issue of KMWorld magazine, warning that “Questions of privacy, bullying, meddling with elections, and hacking of corporate and public systems abound.” In another example, Euan Semple draws attention to ethical issues relating to digital technology generally, stating that “Just because we can do amazing things with our new tools doesn’t mean that they all necessarily make the world a better place. We can use them for good or ill.”

In response to the widespread concerns, numerous international and national organisations have established expert committees that have produced ethical guidance documents on AI. However, there’s been much debate about what constitutes ethical AI and what requirements, standards, and practices are needed to bring it about.

A newly published systematic review [1] by researchers at the Swiss Federal Institute of Technology in Zurich sets out to investigate whether global agreement on these issues is starting to emerge.

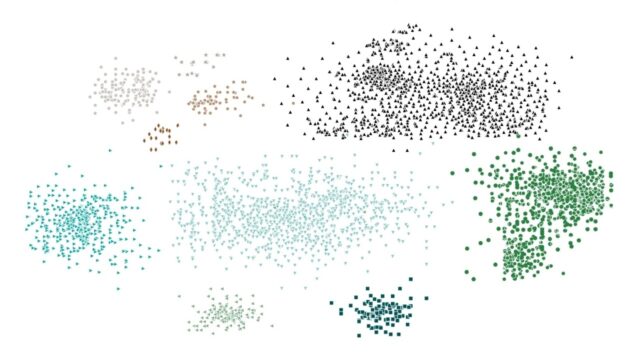

The researchers searched for relevant documents using a protocol adapted from the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) framework. The search included grey literature. Documents were screened against inclusion and exclusion criteria, one of which was that they had to be written in English, German, French, Italian or Greek. A total of 84 documents containing ethical principles or guidelines for AI were identified.

The analysis revealed eleven overarching AI ethical values and principles. They are listed here in descending order of the number of documents in which they appear:

- transparency

- justice and fairness

- non-maleficence

- responsibility

- privacy

- beneficence

- freedom and autonomy

- trust

- sustainability

- dignity

- solidarity.

An emerging convergence was found around the following five principles:

- transparency

- justice and fairness

- non-maleficence

- responsibility

- privacy.

However, significant divergence was also found in how the eleven ethical principles are interpreted, why they are considered important, exactly what they apply to, and how they should be implemented.

The researchers acknowledge a number of limitations in the review. One is that analysing non-academic literature, rather than the peer-reviewed literature that is normally the focus of systematic reviews, may have introduced bias. Another is that the language criteria may have biased the results towards English-language documents.

One notable AI ethics document was not included in the systematic review because it was published after the researchers had completed their analysis. This is the Beijing AI Principles document, which has been published by a group of leading institutes and companies in China, where there is considerable and growing investment in AI development. The Beijing AI Principles appear to be consistent with the eleven principles identified in the systematic review.

Header image source: Gerd Altmann on Pixabay, Public Domain.

Reference:

- Jobin, A., Ienca, M., & Vayena, E. (2019). The global landscape of AI ethics guidelines. Nature Machine Intelligence, 1-11.

Also published on Medium.