Optimization and Complexity: Multi-Objective Optimizations [Systems thinking & modelling series]

This is part 61 of a series of articles featuring the book Beyond Connecting the Dots, Modeling for Meaningful Results.

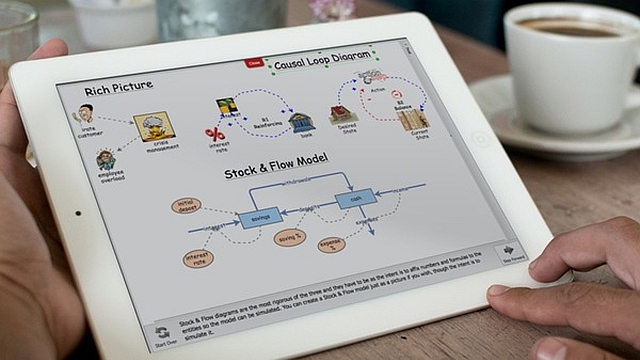

So far our examples have focused on optimizing parameter values for a single population of animals. But what if we had two or more populations?

Imagine we were simulating two interacting populations of animals, such as the hamsters and their food source, the Hippo Toads. If we had historical data on both the toads and the hamsters, we would want to choose parameter values that give the best fit both between the simulated and historical hamster populations and between the simulated and historical toad populations. This is often quite difficult to achieve, because improving the fit for one population will often worsen the fit for the other.

A straightforward way to try to optimize both populations at once is to make our overall error the sum of the errors for the hamsters and the errors for the toads. For instance, if we had two historical data converters, one each for the toads and the hamsters, and two stocks, one for each population, the following equation would combine the absolute value errors for both populations.

Abs([Simulated Hamsters]-[Historical Hamsters]) + Abs([Simulated Toads]-[Historical Toads])
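Outside of Insight Maker, the same idea can be sketched in a few lines of Python. This is only an illustration: the function name `combined_abs_error` and the population numbers are made up for this example and do not come from the book. It accumulates the absolute error for each population over every time step and adds the two totals.

```python
def combined_abs_error(sim_hamsters, hist_hamsters, sim_toads, hist_toads):
    """Sum the absolute errors of both populations over all time steps."""
    hamster_error = sum(abs(s - h) for s, h in zip(sim_hamsters, hist_hamsters))
    toad_error = sum(abs(s - h) for s, h in zip(sim_toads, hist_toads))
    return hamster_error + toad_error


# Made-up series, one value per time step (illustrative only)
sim_hamsters = [10, 12, 15]
hist_hamsters = [11, 12, 13]
sim_toads = [100, 90, 80]
hist_toads = [95, 92, 85]

total_error = combined_abs_error(sim_hamsters, hist_hamsters, sim_toads, hist_toads)
print(total_error)
```

An optimizer would then search for the parameter values that make this single number as small as possible.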

Simply summing the values can create issues in practice. Let us imagine that the toad population is generally 10 times as large as the hamster population. If this were the case, the error in predicting the toads might be much larger than the error in predicting the hamsters, so the optimizer would focus on improving the toad predictions to the detriment of the accuracy of the hamster predictions.
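A quick numeric sketch makes the scaling problem concrete. The helper `error_shares` and the numbers below are hypothetical, chosen so that both simulated populations miss their historical values by the same 10 percent. It assumes at least one of the two errors is non-zero, otherwise the division would fail.

```python
def error_shares(sim_hamsters, hist_hamsters, sim_toads, hist_toads):
    """Return the fraction of the summed absolute error due to each population.

    Assumes the total error is non-zero.
    """
    hamster_error = abs(sim_hamsters - hist_hamsters)
    toad_error = abs(sim_toads - hist_toads)
    total = hamster_error + toad_error
    return hamster_error / total, toad_error / total


# Both simulations are 10% too high, but the toads are 10 times as numerous.
hamster_share, toad_share = error_shares(110, 100, 1100, 1000)
print(round(hamster_share, 2), round(toad_share, 2))
```

Even though both fits are equally poor in relative terms, roughly ten elevenths of the summed error comes from the toads, so reducing the toad error pays off far more for the optimizer.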

One way to attempt to address this issue is to use the percent error instead of the error magnitude. For example:

Abs([Simulated Hamsters]-[Historical Hamsters])/[Historical Hamsters] + Abs([Simulated Toads]-[Historical Toads])/[Historical Toads]

The percent error metric will be more resilient to differences in scales between the different populations. However, it will run into issues if either historical population becomes 0 in size or becomes very small.
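The percent error version can be sketched the same way. The `floor` parameter below is not part of the book's equation; it is one possible workaround, assumed here for illustration, that keeps the denominator from falling below a chosen minimum so that zero or tiny historical values do not break the calculation. The right value for `floor` depends on the scale of your data.

```python
def percent_error(simulated, historical, floor=1.0):
    """Absolute error relative to the historical value.

    `floor` is an assumed safeguard (not in the original equation): the
    denominator is never allowed to drop below it.
    """
    return abs(simulated - historical) / max(abs(historical), floor)


def combined_percent_error(sim_hamsters, hist_hamsters, sim_toads, hist_toads):
    """Sum of the percent errors for the hamsters and the toads."""
    return (percent_error(sim_hamsters, hist_hamsters)
            + percent_error(sim_toads, hist_toads))


# Both populations are 10% too high, so each contributes equally.
print(combined_percent_error(110, 100, 1100, 1000))
```

With this metric a 10 percent miss counts the same for both populations, regardless of how many animals each one contains.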

Another wrinkle with multi-objective optimizations is that one objective may be more important than the other objectives. For instance, let’s imagine our toad and hamster populations were roughly the same size so we do not have to worry about scaling. However, in this case we care much more about correctly predicting the hamsters than we do the toads. The whole point of the model is to estimate the hamster population, so we want to make that as accurate as possible, but we would still like to do well predicting the toads if we are able to.

You can tackle issues like these by “weighting” the different objectives in your aggregate error function. This is most simply done by multiplying each objective by a quantity that indicates its relative importance. For instance, if we thought getting the hamsters right was about twice as important as getting the toads right, we could use something like:

2*Abs([Simulated Hamsters]-[Historical Hamsters]) + Abs([Simulated Toads]-[Historical Toads])

This makes one unit of error in the hamster population simulation count just as much as two units of error for the toad population simulation (see Notes).
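As a minimal sketch of the weighted version, assuming hypothetical names `weighted_error`, `hamster_weight`, and `toad_weight` that are not from the book:

```python
def weighted_error(sim_hamsters, hist_hamsters, sim_toads, hist_toads,
                   hamster_weight=2.0, toad_weight=1.0):
    """Weighted sum of absolute errors; a larger weight means a more important objective."""
    hamster_error = abs(sim_hamsters - hist_hamsters)
    toad_error = abs(sim_toads - hist_toads)
    return hamster_weight * hamster_error + toad_weight * toad_error


# One unit of hamster error ...
one_hamster_unit = weighted_error(101, 100, 100, 100)
# ... is penalized exactly as much as two units of toad error.
two_toad_units = weighted_error(100, 100, 102, 100)
print(one_hamster_unit, two_toad_units)
```

Only the ratio between the weights matters to the optimizer: weights of 2 and 1 lead to the same best-fit parameters as weights of 20 and 10.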

| Exercise 9-4 |
|---|
| Why does the percent error equation have issues when the historical data become very small? What happens when the historical data become 0? |

Next edition: Optimization and Complexity: Finding the Best Fit.

Article sources: Beyond Connecting the Dots, Insight Maker. Reproduced by permission.

Notes:

- Weighting is a useful technique you can use for other optimization tasks. Imagine you had a model simulating the growth of your business over the next 20 years. You want to use this model to adjust your strategy to achieve three objectives: maximizing revenue, maximizing profit, and maximizing company size. Perhaps maximizing profit would be the most important objective, with maximizing company size being the least important. You can use weights to combine these three criteria into a single criterion for use by the optimizer.