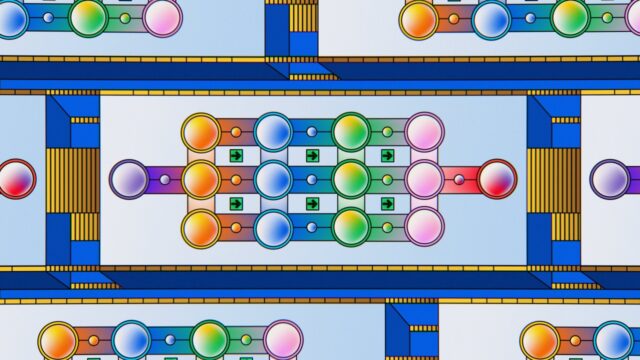

Google MultiModel: a potentially significant advance for artificial intelligence (AI)

Deep learning has seen great success across many fields, for example in speech recognition, image classification, and translation. However, the design and tuning effort needs to be repeated for each new task, limiting the impact of deep learning. The current approach is also very different from the general nature of the human brain, which can learn many different tasks and benefits from transfer learning.

In response, a newly published Google study¹ asks, “Can we create a unified deep learning model to solve tasks across multiple domains?”

The study takes a step towards answering this question positively by introducing the “MultiModel” architecture, a single deep-learning model that can simultaneously learn multiple tasks from various domains. Specifically, MultiModel was built using TensorFlow and trained simultaneously on eight tasks spanning several domains: ImageNet image classification, multiple translation tasks, image captioning, speech recognition, and English parsing.

The results were as follows:

- MultiModel learns all of the tasks and achieves good performance. This performance is not state-of-the-art at present, but is above many task-specific models studied in the recent past. The model is expected to come closer to state-of-the-art with more tuning.

- Two key insights make MultiModel work and are the main contributions of the study: (1) small modality-specific sub-networks convert inputs into a unified representation and convert outputs back from it, and (2) computational blocks of different kinds are crucial for good results on various problems. (The sub-networks are needed because training on inputs of widely different sizes and dimensions, such as images, sound waves, and text, requires converting them into a joint representation space.)

- Adding computational blocks doesn’t hurt performance, even on tasks they were not designed for. In fact, both attention and mixture-of-experts layers slightly improve performance of MultiModel on ImageNet, the task that needs them the least.

- MultiModel performs similarly to single-task models on large tasks, and better, sometimes significantly, on tasks where less data is available, such as parsing.

- Mixing different computational blocks is in fact a good way to improve performance across a variety of tasks.

- The key to success comes from designing a multi-modal architecture in which as many parameters as possible are shared and from using computational blocks from different domains together.
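The first insight above, modality-specific sub-networks around a shared body, can be illustrated with a deliberately tiny sketch. This is not the MultiModel code or its architecture: the real model uses convolutional, attention, and mixture-of-experts blocks, whereas everything here (`ModalityNet`, `shared_body`, `run`, the width `D`, the random weights, and the toy inputs) is a hypothetical stand-in chosen only to show how inputs of different widths can pass through one set of shared parameters.

```python
import random

D = 4  # width of the shared representation (hypothetical, tiny for illustration)


def make_linear(n_in, n_out, rng):
    """Return a random n_in x n_out weight matrix as nested lists."""
    return [[rng.uniform(-1, 1) for _ in range(n_out)] for _ in range(n_in)]


def apply_linear(vec, weights):
    """Multiply a vector of length n_in by an n_in x n_out matrix."""
    n_out = len(weights[0])
    return [sum(v * row[j] for v, row in zip(vec, weights)) for j in range(n_out)]


class ModalityNet:
    """Toy modality-specific sub-network (an assumption, not the paper's design).

    It maps per-step features of a modality-specific width into the shared
    width D, and maps shared vectors back to that modality's width.
    """

    def __init__(self, feature_width, rng):
        self.to_shared = make_linear(feature_width, D, rng)
        self.from_shared = make_linear(D, feature_width, rng)

    def encode(self, sequence):
        return [apply_linear(step, self.to_shared) for step in sequence]

    def decode(self, shared_sequence):
        return [apply_linear(step, self.from_shared) for step in shared_sequence]


def shared_body(shared_sequence, weights):
    """Stand-in for the shared blocks: one linear layer followed by ReLU.

    The same weights are used whatever modality the input came from.
    """
    return [[max(0.0, x) for x in apply_linear(step, weights)]
            for step in shared_sequence]


rng = random.Random(0)
body_weights = make_linear(D, D, rng)  # parameters shared by every task
nets = {"image": ModalityNet(3, rng), "text": ModalityNet(5, rng)}


def run(inputs, source, target):
    """Encode with the source modality net, apply the shared body, decode to target."""
    shared = shared_body(nets[source].encode(inputs), body_weights)
    return nets[target].decode(shared)


image_input = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]  # 2 steps, 3 features each
text_input = [[1, 0, 0, 0, 0], [0, 0, 1, 0, 0], [0, 0, 0, 0, 1]]  # 3 steps, 5 features

image_to_text = run(image_input, "image", "text")  # loosely analogous to captioning
text_to_text = run(text_input, "text", "text")     # loosely analogous to translation
```

In this toy version the output has as many steps as the input, which a real sequence model would not require. The point is only that `body_weights` is reused for both calls, while each modality contributes just its own small encoder and decoder.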

To enable other people to experiment with the code, it is being made available on the TensorFlow GitHub site.

Article sources: CIO Dive, VentureBeat.

Header image source: Adapted from Google by Carlos Luna, which is licensed under CC BY 2.0.

Reference:

- Kaiser, L., Gomez, A.N., Shazeer, N., Vaswani, A., Parmar, N., Jones, L., and Uszkoreit, J. (2017). One Model To Learn Them All. arXiv:1706.05137.

Also published on Medium.